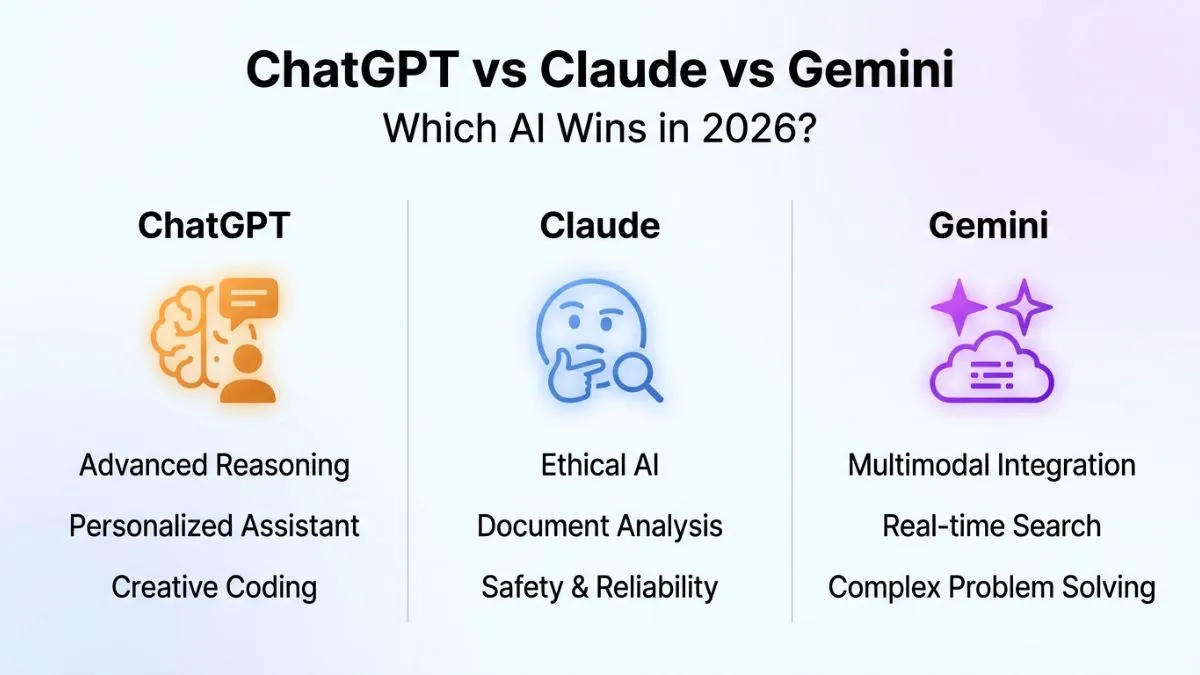

The AI wars of 2026 have officially reached a boiling point. Three titans — Anthropic’s Claude 4, OpenAI’s GPT-5, and Google’s Gemini 2 Ultra — are locked in a battle for dominance that’s reshaping how we work, create, and think. If you’ve been sitting on the fence about which AI assistant to actually pay for, we don’t blame you. The gap between these models has narrowed dramatically, the pricing has gotten surprisingly competitive, and each platform is making bold claims about benchmark supremacy. So we put all three through their paces to give you the real answer: which one is actually worth your money in 2026?

Let’s be clear upfront: there’s no single “best” AI for everyone. A software engineer stress-testing code logic has wildly different needs than a novelist looking for a creative collaborator, or a marketing director trying to automate campaign briefs at scale. What we can do is break down exactly where each model excels, where it stumbles, and how the pricing stacks up against real-world value. We’ve spent weeks running structured prompts, professional tasks, and edge-case experiments so you don’t have to.

By mid-2026, the AI landscape looks almost unrecognizable compared to just two years ago. Context windows have ballooned, multimodal capabilities are now table stakes, and the race to integrate AI natively into enterprise workflows has fundamentally changed what “good” even means. These aren’t chatbots anymore — they’re cognitive infrastructure.

Specs, Pricing, and What You’re Actually Getting

Before we dive into performance, let’s talk numbers. GPT-5, which OpenAI launched in January 2026, is available through ChatGPT Plus at $22/month for consumers, with API access starting at $15 per million input tokens. It ships with a 256K-token context window and full native multimodal support covering text, images, audio, and video frames. OpenAI’s Team and Enterprise tiers, which range from $30 to $60 per user per month, unlock priority compute and advanced tool use.

Claude 4 dropped in March 2026 and is arguably the most anticipated release in Anthropic’s history. Claude Pro subscribers pay $20/month, while the API runs at $12 per million input tokens for Claude 4 Sonnet and $18 per million for the flagship Claude 4 Opus. The headline spec here is a jaw-dropping 500K token context window — the largest of the three — making it uniquely suited for processing entire codebases, lengthy legal documents, or book-length manuscripts in a single pass.

Gemini 2 Ultra, available through Google One AI Premium at $19.99/month, ties deeply into the Google ecosystem — think Workspace, YouTube, Search, and Maps — and offers a 200K token context window. API pricing sits at $10 per million input tokens, making it the most affordable at scale. Google has also quietly positioned Gemini 2 Ultra as the go-to for real-time data tasks, thanks to its live Search grounding that none of the competitors have fully matched.
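To put those API rates in perspective, here is a minimal back-of-the-envelope sketch in Python. It uses only the input-token prices quoted above; the function name and the 50-million-token workload are our own illustrative assumptions, and output-token pricing (which we haven’t listed) is deliberately left out.

```python
# Input-token prices in USD per million tokens, as quoted in this article.
INPUT_PRICE_PER_MILLION = {
    "GPT-5": 15.0,
    "Claude 4 Sonnet": 12.0,
    "Claude 4 Opus": 18.0,
    "Gemini 2 Ultra": 10.0,
}


def estimate_input_cost(model: str, input_tokens: int) -> float:
    """Estimate input-token spend in USD. Output tokens are not included."""
    return INPUT_PRICE_PER_MILLION[model] * input_tokens / 1_000_000


if __name__ == "__main__":
    monthly_tokens = 50_000_000  # hypothetical monthly workload, for illustration
    for model in INPUT_PRICE_PER_MILLION:
        cost = estimate_input_cost(model, monthly_tokens)
        print(f"{model}: ${cost:,.2f}")
```

At that hypothetical volume, input costs alone come to $750 for GPT-5, $600 for Claude 4 Sonnet, $900 for Claude 4 Opus, and $500 for Gemini 2 Ultra, so the per-million gap adds up quickly at scale.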

“Context window size stopped being a marketing gimmick around 2025. In 2026, it’s actually the deciding factor for enterprise contracts worth millions of dollars.” — A senior ML engineer at a Fortune 500 company, speaking to PixlRun
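If you want a rough sense of whether a given document would fit in each model’s context window, the sketch below may help. It relies on two assumptions of ours: that "K" means 1,000 tokens, and that English text averages about four characters per token, which is a common rule of thumb and not an official tokenizer count for any of these models. The helper names and the output reserve are hypothetical.

```python
# Context window sizes in tokens, as quoted in this article (K taken as 1,000).
CONTEXT_WINDOW_TOKENS = {
    "GPT-5": 256_000,
    "Claude 4": 500_000,
    "Gemini 2 Ultra": 200_000,
}

# Assumption: roughly 4 characters per token for English prose.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate, rounded up. Not a real tokenizer."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def models_that_fit(text: str, reserve_for_output: int = 4_000) -> list[str]:
    """List the models whose window holds the text plus room for a reply."""
    needed = estimate_tokens(text) + reserve_for_output
    return [
        model
        for model, window in CONTEXT_WINDOW_TOKENS.items()
        if needed <= window
    ]


if __name__ == "__main__":
    manuscript = "x" * 1_200_000  # stand-in for a ~1.2 million character file
    print(models_that_fit(manuscript))
```

For anything close to a limit, count tokens with the provider’s own tokenizer instead of trusting the estimate.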

Benchmark Performance: Where the Numbers Get Interesting

On the big standardized benchmarks, the picture is more nuanced than any single company’s press release would have you believe. On MMLU Pro (the 2026 standard for general reasoning), GPT-5 leads with a score of 91.4%, followed closely by Claude 4 Opus at 90.8% and Gemini 2 Ultra at 89.6%. With less than two percentage points separating first from third, these differences are practically tiny for most use cases.

Where it gets genuinely interesting is in specialized benchmarks. On HumanEval+ for coding tasks, Claude 4 Opus edges out the competition with 94.2% pass@1, compared to GPT-5’s 93.7% and Gemini 2 Ultra’s 91.1%. Claude’s training methodology, which emphasizes careful step-by-step reasoning and Constitutional AI principles, seems to pay dividends in tasks that require sustained logical precision.

Gemini 2 Ultra, however, blows the other two away on real-time factual accuracy. Because it can ground responses in live Google Search results, it virtually eliminates hallucinations on current-events queries — an area where both GPT-5 and Claude 4 still stumble meaningfully when pushed outside their training cutoffs. For journalists, researchers, and anyone dealing with rapidly changing information, this is not a small advantage.

- Best for coding: Claude 4 Opus (94.2% HumanEval+)

- Best for general reasoning: GPT-5 (91.4% MMLU Pro)

- Best for real-time accuracy: Gemini 2 Ultra (live Search grounding)

- Largest context window: Claude 4 (500K tokens)

- Most affordable API: Gemini 2 Ultra ($10/million input tokens)

Real-World Use Cases: Writing, Coding, and Creative Work

Benchmarks only tell part of the story. In actual day-to-day use, personality and output style matter enormously. GPT-5 remains the most versatile generalist — it’s fluent, fast, and remarkably good at picking up implicit context. Ask it to write a punchy product description, debug a React component, summarize a 50-page report, or brainstorm naming ideas, and it handles all of them with minimal friction. The interface through ChatGPT is also the most polished consumer experience of the three, with memory features, custom GPTs, and DALL-E 4 integration baked in.

Claude 4 has a noticeably different character. Its responses feel more careful and more nuanced, sometimes to a fault if you need something quick and punchy. But for long-form writing, technical documentation, complex research synthesis, or any task where you really don’t want the model to cut corners, Claude 4 Opus is our consistent favorite. Anthropic has also doubled down on transparency, giving users clearer explanations of why Claude declines certain requests. As a result, its refusals don’t feel as arbitrary or frustrating as some competitors’ guardrails can.

Gemini 2 Ultra is the one to watch if you live inside Google’s ecosystem. Tight integration with Gmail, Docs, Meet, and the rest of Workspace makes it a productivity multiplier for teams already committed to Google’s stack. Its multimodal vision capabilities have also matured significantly: analyzing charts, reading handwritten notes, and processing complex diagrams all felt noticeably more reliable than in earlier generations. The trade-off is that outside the Google ecosystem, it sometimes feels like a product that’s still finding its footing as a standalone assistant.

“I switched our entire content team to Claude 4 for long-form drafting and kept Gemini for research because it pulls live data. GPT-5 became my go-to for client presentations. Turns out you don’t have to pick just one.”

The Bottom Line

Here’s the honest verdict: none of these models is definitively, universally “the best” in 2026 — and anyone who tells you otherwise is either oversimplifying or selling something. What we can say with confidence is this:

- Choose GPT-5 if you want the most well-rounded, consumer-friendly AI with the broadest feature ecosystem and strong general performance across nearly every task type.

- Choose Claude 4 Opus if you’re doing heavy lifting — long documents, complex coding, nuanced writing — and you value precision and a thoughtful, reliable voice over raw speed.

- Choose Gemini 2 Ultra if real-time accuracy matters, you’re embedded in the Google ecosystem, or you’re watching your API costs and need enterprise-scale deployment without breaking the bank.

The most sophisticated power users in 2026 aren’t picking one AI and going all-in. They’re routing different tasks to different models based on what each does best. Multi-model orchestration platforms are making this easier every month, and at these price points, running two or even three subscriptions simultaneously is a genuinely reasonable choice for professionals.
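As a rough illustration of what task routing can look like, here is a minimal Python sketch. The task categories, the `route` function, and the fallback choice are hypothetical names of our own, and the mapping simply mirrors the recommendations in this article. It makes no API calls; a real orchestration setup would hand the chosen model name to whichever client library you use.

```python
# Task-to-model mapping based on the recommendations in this article.
# The category names are illustrative assumptions, not a standard taxonomy.
ROUTES = {
    "coding": "Claude 4 Opus",
    "long_form_writing": "Claude 4 Opus",
    "real_time_research": "Gemini 2 Ultra",
    "general": "GPT-5",
}

# Assumption: fall back to the generalist when the task type is unrecognized.
DEFAULT_MODEL = "GPT-5"


def route(task_type: str) -> str:
    """Return the model name suggested for a task type."""
    return ROUTES.get(task_type.strip().lower(), DEFAULT_MODEL)


if __name__ == "__main__":
    for task in ("coding", "real_time_research", "client_presentation"):
        print(f"{task} -> {route(task)}")
```

A lookup table like this keeps the routing decision easy to read and easy to change as the models leapfrog each other.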

The real winner of the AI race in 2026? The people who learn how to use these tools strategically. The model you’ll get the most out of is almost always the one you understand best — so pick one, go deep, and build from there.