When OpenAI dropped GPT-5 in 2025, the AI world collectively held its breath. After months of hype, leaked benchmarks, and the usual parade of breathless predictions, the question everyone actually wanted answered was refreshingly simple: is GPT-5 genuinely smarter than GPT-4, or is this another incremental upgrade dressed up in a marketing tuxedo? Having spent considerable time putting GPT-5 through its paces, from complex reasoning tasks and multimodal challenges to real-world coding sessions and creative writing sprints, we’re ready to give you the honest breakdown. Spoiler: it’s more complicated than a simple yes or no.

GPT-5 officially became available to ChatGPT Plus subscribers (at $20/month) in May 2025, with API access rolling out to developers at a starting rate of $15 per million input tokens and $60 per million output tokens. That is a significant jump from GPT-4 Turbo’s pricing, but one OpenAI argues is justified by the higher performance ceiling. By Q1 2026, the model had become the backbone of hundreds of enterprise deployments, with OpenAI reporting over 500 million weekly active users across its platform. That kind of adoption doesn’t happen by accident.

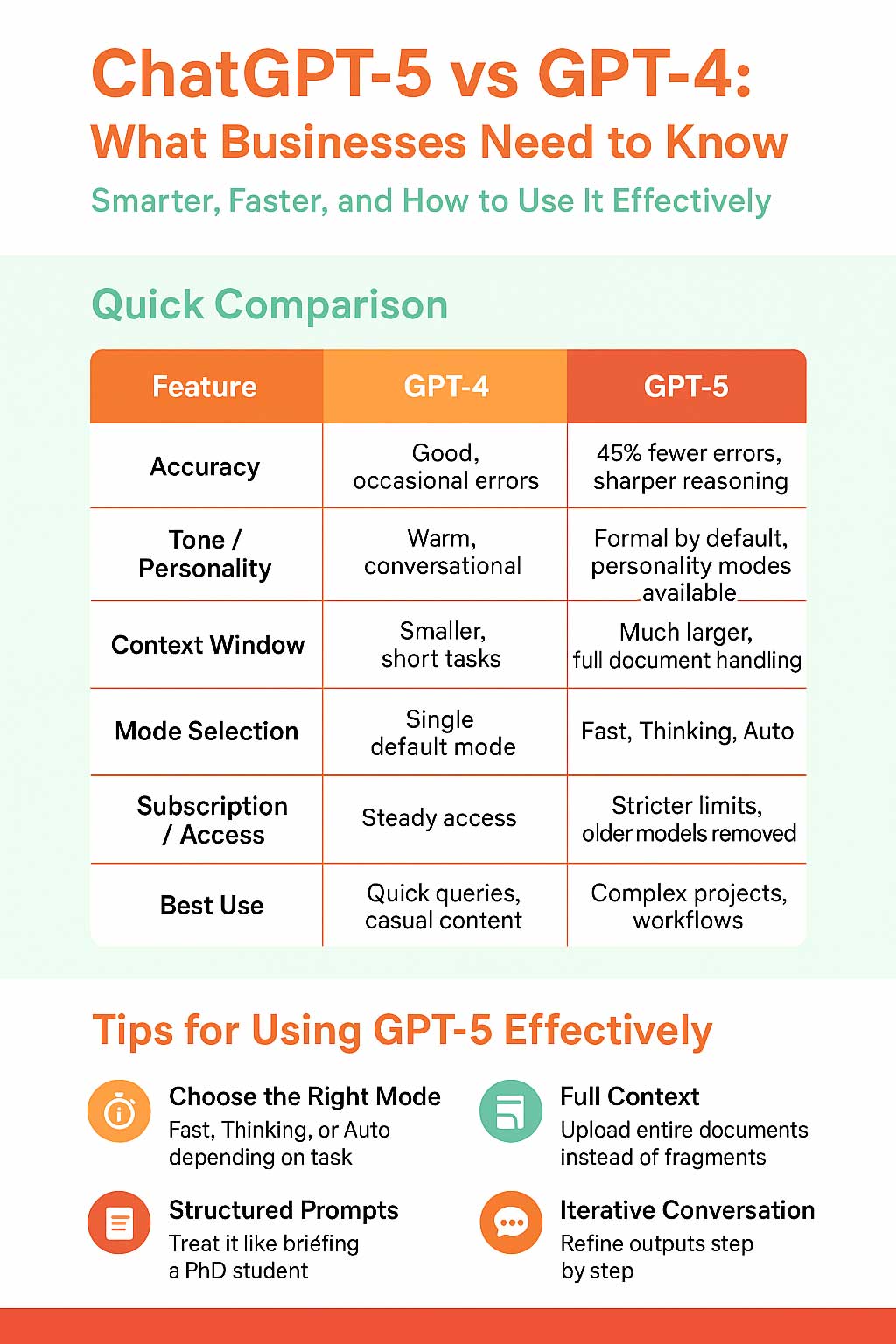

What makes this comparison especially fascinating is the baseline we’re working against. GPT-4, launched in March 2023, was itself a generational leap. It had already redefined what people expected from AI assistants. So asking whether GPT-5 is “smarter” isn’t just about raw benchmark numbers — it’s about whether the improvements actually translate to your daily workflow, your creative projects, your dev environment, and your business needs.

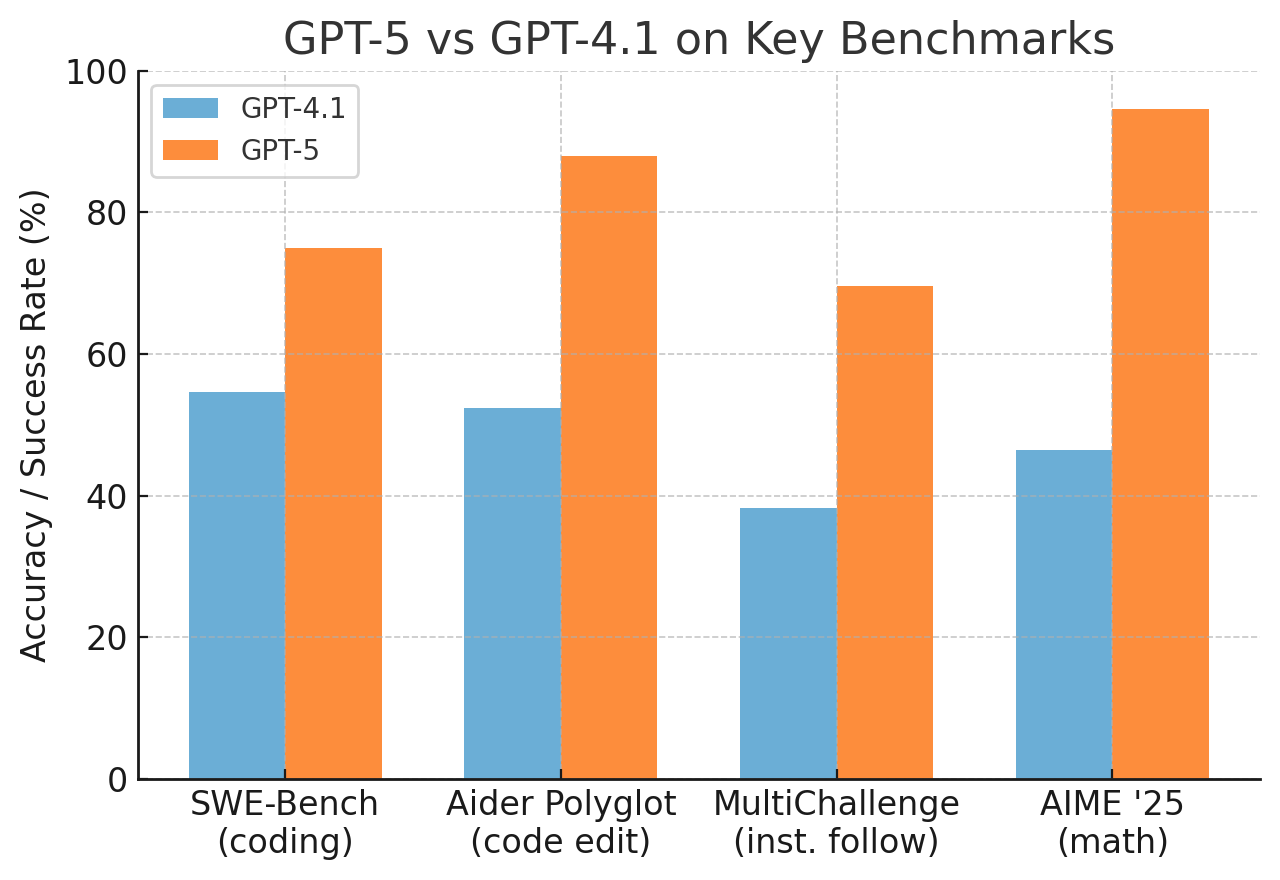

Benchmarks and Raw Performance: The Numbers Tell a Story

Let’s start with the cold, hard data. On MMLU (Massive Multitask Language Understanding), GPT-5 scores 91.2% compared to GPT-4’s already-strong 86.4%, a gain of 4.8 points. On the HumanEval coding benchmark, GPT-5 hits 94.4% versus GPT-4’s 67%. That 27.4-point jump is not a marginal improvement but a seismic one. For math reasoning on the MATH dataset, GPT-5 achieves 79.6% accuracy, compared to GPT-4’s 52.9%, a 26.7-point gain. These aren’t cherry-picked numbers: across all three benchmarks GPT-5 outperforms its predecessor, and on coding and math it does so by margins that would have seemed impossible just two years ago.
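The scores quoted above can be read in two ways: as a raw percentage-point gain, or as the share of GPT-4’s wrong answers that GPT-5 eliminates. The short sketch below computes both from the figures in this article; the `compare` helper and the "error reduction" framing are our own illustration, not something OpenAI publishes.

```python
# Benchmark scores quoted in the article, as (GPT-4, GPT-5) percent correct.
scores = {
    "MMLU": (86.4, 91.2),
    "HumanEval": (67.0, 94.4),
    "MATH": (52.9, 79.6),
}

def compare(gpt4: float, gpt5: float) -> tuple[float, float]:
    """Return (percentage-point gain, percent of GPT-4's errors removed)."""
    point_gain = gpt5 - gpt4
    # Errors are whatever GPT-4 got wrong: 100 minus its score.
    error_reduction = point_gain / (100.0 - gpt4) * 100.0
    return round(point_gain, 1), round(error_reduction, 1)

gains = {name: compare(*pair) for name, pair in scores.items()}

for name, (points, errors_removed) in gains.items():
    print(f"{name}: +{points} points, {errors_removed}% of errors removed")
```

Seen this way, the MMLU gain looks less modest than its 4.8 points suggest, because GPT-4 had little headroom left on that benchmark.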

Context window is another arena where GPT-5 pulls ahead, though not on the headline number. The model ships with a native 128,000-token context window, the same size GPT-4 Turbo offered at its peak, with enterprise tiers supporting up to 1 million tokens. In practical terms, that means you can feed it entire codebases, lengthy legal documents, or a full novel and it won’t start forgetting what it read three chapters ago. The difference at 128K is in how coherently GPT-5 handles that extended context: long-form memory degradation, a persistent frustration with earlier models, has been dramatically reduced.
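If you want a quick sanity check on whether a document is likely to fit in one of those windows, a back-of-the-envelope estimate is enough. The sketch below assumes roughly four characters per token, a common rule of thumb for English prose rather than a real tokenizer, and reserves some room for the model’s reply. The function names and the `reserve` default are hypothetical.

```python
CHARS_PER_TOKEN = 4  # assumption: rough average for English text, not a tokenizer

def estimate_tokens(text: str) -> int:
    """Rough token estimate, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division

def fits(text: str, window: int = 128_000, reserve: int = 4_000) -> bool:
    """True if the text plus a reserved reply budget fits in the window."""
    return estimate_tokens(text) + reserve <= window

short_doc = "x" * 400_000   # about 100K estimated tokens
long_doc = "x" * 600_000    # about 150K estimated tokens

print(fits(short_doc))                     # standard 128K window
print(fits(long_doc))                      # too large for 128K
print(fits(long_doc, window=1_000_000))    # enterprise-tier window
```

For anything close to the limit, count tokens with the model’s actual tokenizer instead, since code and non-English text can diverge a lot from the four-character estimate.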

“GPT-5 isn’t just incrementally better — it’s the first model that made me genuinely rethink how I structure my entire research workflow.” — a sentiment echoed across developer forums throughout late 2025.

Response latency has also improved. In standard API configurations, GPT-5 averages around 1.8 seconds for a typical 500-token response, compared to GPT-4 Turbo’s average of 2.4 seconds under similar conditions. In a 2026 AI landscape where real-time interaction is table stakes, that 25% drop in latency compounds into a meaningfully different user experience over hours of use.
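It is worth being precise about what that 25% measures, because "faster" can mean two different things. The sketch below takes the two latency figures quoted above and computes both the reduction in waiting time and the gain in throughput; the helper names are ours, for illustration only.

```python
def latency_reduction_pct(old_s: float, new_s: float) -> float:
    """Percent less time spent waiting per response."""
    return (old_s - new_s) / old_s * 100

def throughput_gain_pct(old_s: float, new_s: float) -> float:
    """Percent more responses completed in the same wall-clock time."""
    return (old_s / new_s - 1) * 100

GPT4_TURBO_S = 2.4  # seconds per 500-token response, as quoted
GPT5_S = 1.8

reduction = latency_reduction_pct(GPT4_TURBO_S, GPT5_S)
gain = throughput_gain_pct(GPT4_TURBO_S, GPT5_S)

print(f"Latency reduction: {reduction:.1f}%")
print(f"Throughput gain:   {gain:.1f}%")
```

The 25% figure is the latency reduction. Measured as throughput, the same numbers work out to roughly a one-third gain, which is the more flattering way to state it.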

Reasoning and Real-World Intelligence: Where It Actually Matters

Benchmarks are one thing. But the real test is how GPT-5 handles the messy, ambiguous, genuinely hard problems that don’t have clean answers. And here’s where things get interesting. GPT-5 demonstrates a noticeably stronger ability to hold multi-step logical chains together without losing the thread. Ask it to analyze a complex business strategy, weigh competing ethical frameworks, or debug a sprawling Python project with interdependent modules, and you’ll see the difference almost immediately.

One area where the improvement is stark is instruction following. GPT-4 had a frustrating tendency to drift: you’d ask for a 300-word summary and get 600 words, or request output in a specific format only to watch the model improvise its own structure. GPT-5 follows instructions with a precision that feels almost surgical. In internal OpenAI evals, GPT-5 reportedly scored 38% higher than GPT-4 on complex instruction-following tasks. In day-to-day use, this translates to fewer correction loops and less prompt-engineering gymnastics.

Multimodal capabilities have also taken a serious step forward. GPT-5’s vision understanding isn’t just better at identifying objects in images — it can interpret charts, read handwritten notes, analyze UI screenshots for accessibility issues, and even reason about spatial relationships in photographs with a depth that GPT-4’s vision mode simply couldn’t match. For product teams, designers, and researchers, this isn’t a nice-to-have. It’s a genuine workflow transformation.

- Code generation: GPT-5 writes production-quality code in over 30 languages with far fewer logical errors and better adherence to modern best practices

- Mathematical reasoning: Multi-step calculus, proof-writing, and statistical analysis are dramatically more reliable

- Multilingual performance: Significant improvements in low-resource languages, with support expanding to over 50 languages at high fluency

- Creativity and tone control: More nuanced emotional range in creative writing with better adherence to specified style guides

- Safety and refusals: More contextually aware refusals — fewer false positives, better handling of edge cases

The 2026 Competitive Landscape: Does GPT-5 Still Lead the Pack?

Here’s the honest context that a lot of GPT-5 reviews written in 2025 couldn’t fully account for: the AI market in early 2026 is ruthlessly competitive. Google’s Gemini Ultra 2.0, Anthropic’s Claude 4, and Meta’s Llama 4 405B have all launched or significantly updated since GPT-5’s debut, and the playing field has leveled. On many reasoning and coding benchmarks, Claude 4 and Gemini Ultra 2.0 now score within the margin of error of GPT-5.

What GPT-5 retains, however, is the ecosystem advantage. ChatGPT’s integration depth — with Microsoft 365 Copilot, Azure OpenAI services, the GPT Store (which crossed 3 million custom GPTs by January 2026), and third-party developer tools — creates a compounding network effect that raw benchmark superiority alone can’t replicate. If you’re already embedded in the OpenAI ecosystem, GPT-5 is a no-brainer upgrade. If you’re evaluating from scratch, the calculus is genuinely trickier.

Pricing remains a real concern for indie developers and small teams. At $60 per million output tokens, GPT-5 API costs can spiral quickly in production environments that require verbose responses. By comparison, GPT-4o remains available at $5 per million output tokens, one-twelfth the price, and for many use cases (customer service bots, basic content generation, straightforward summarization) it still delivers exceptional value per dollar. GPT-5 is a sports car; you don’t always need a sports car to get to the grocery store.
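To see how quickly that gap adds up, here is a minimal cost sketch using the output prices quoted above. The workload (10,000 responses a day at 500 tokens each) is a made-up example, the dictionary keys are just labels rather than official API model identifiers, and input-token costs are left out because this article only gives an input price for GPT-5.

```python
# Output prices in USD per million tokens, as quoted in the article.
OUTPUT_PRICE = {"gpt-5": 60.0, "gpt-4o": 5.0}

def daily_output_cost(model: str, responses_per_day: int,
                      tokens_per_response: int) -> float:
    """Estimate daily spend on output tokens only (input tokens ignored)."""
    total_tokens = responses_per_day * tokens_per_response
    return total_tokens / 1_000_000 * OUTPUT_PRICE[model]

# Hypothetical workload: 10,000 responses a day, 500 output tokens each.
gpt5_cost = daily_output_cost("gpt-5", 10_000, 500)
gpt4o_cost = daily_output_cost("gpt-4o", 10_000, 500)

print(f"GPT-5:  ${gpt5_cost:,.2f} per day")
print(f"GPT-4o: ${gpt4o_cost:,.2f} per day")
```

At these rates the example workload comes to $300 a day on GPT-5 against $25 on GPT-4o, so routing routine requests to the cheaper model is an obvious first optimization.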

The Verdict

So, is GPT-5 actually smarter than GPT-4? On raw capability, yes, and in ways that matter. The benchmark improvements are real, the reasoning upgrades are tangible in everyday use, and the multimodal capabilities represent a genuine qualitative leap rather than a spec sheet bullet point. For professionals (developers, researchers, writers, analysts) who push AI assistants to their limits daily, GPT-5 is a meaningful upgrade that earns its premium pricing.

The caveats are real too. The API pricing puts serious GPT-5 usage out of reach for budget-conscious builders without careful optimization. And in a 2026 market where Claude 4 and Gemini Ultra 2.0 are nipping at its heels, GPT-5’s throne is contested rather than unchallenged. But if you’re a ChatGPT Plus subscriber, you’re already getting access to GPT-5 at no additional cost — and that alone makes it one of the best value propositions in consumer AI today.

The bottom line: GPT-5 offers more capability than most people will ever need, and for now it remains the gold standard against which every competitor is measured.